Radeon Pro WX 8200 – AMD’s new high-end professional GPU may be power-hungry and slightly off the pace in some applications, but its trump card is multitasking, which means you can render in the background and still get a fully responsive 3D viewport.

It’s been two years since AMD launched its Radeon Pro WX family of GPUs, starting with the Radeon Pro WX 4100 and WX 5100 for 3D CAD, and the Radeon Pro WX 7100 for entry-level GPU rendering and VR. Last year it extended the family into the high end with the Radeon Pro WX 9100, the first professional GPU to feature the company’s long-awaited Vega GPU architecture.

The Radeon Pro WX 9100 fell a little short of expectations. Our tests showed it generally sat somewhere between the Nvidia Quadro P4000 and Quadro P5000 but performed well in select workflows such as OpenCL-based ray trace rendering and immersive VR in DirectX applications.

This summer, AMD added a new high-end model to the fold, the Radeon Pro WX 8200. On paper, this GPU is very similar to the WX 9100, but it costs considerably less. At launch, AMD claimed that it offered the best workstation graphics performance for under $1,000 for real-time visualisation, virtual reality (VR) and photorealistic rendering. However, this bold claim was qualified in the footnotes: it was based on tests in select workflows, including Adobe Premiere Pro, Autodesk Maya, Radeon ProRender and Blender Cycles.

Radeon Pro WX 8200 – specifications

The Radeon Pro WX 8200 is very similar to its older sibling, the WX 9100, in both looks and specifications. Both are double-slot GPUs with a thermal design power (TDP) of 230W, which is quite a lot for a desktop GPU. This means you’ll need a mid-range to high-end workstation, such as the HP Z4 or Dell Precision 5820 Tower, with a hefty power supply and both a 6-pin and an 8-pin external power connector. The WX 8200 has four mini DisplayPort outputs, while the WX 9100 has six.

The Radeon Pro WX 8200 packs in 3,584 stream processors and delivers 10.75 TFLOPS of peak single-precision (FP32) performance. On paper, this is around 88% of what the WX 9100 offers with 4,096 stream processors and 12.3 TFLOPS, but it costs considerably less. On scan.co.uk, it is currently going for £790 (ex VAT), whereas the WX 9100 will set you back £1,220 (ex VAT).
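As a quick sanity check on the "around 88%" figure, the short Python sketch below recomputes the ratios from the specifications and scan.co.uk prices quoted above, and adds a price-per-TFLOP figure. That metric is our own illustration, not an AMD or retailer figure, and peak FP32 throughput is only a rough proxy for real-world performance.

```python
# Spec and price figures quoted in this review (scan.co.uk prices, ex VAT).
WX_8200 = {"stream_processors": 3584, "fp32_tflops": 10.75, "price_gbp": 790}
WX_9100 = {"stream_processors": 4096, "fp32_tflops": 12.3, "price_gbp": 1220}


def ratio(a: dict, b: dict, key: str) -> float:
    """Return a[key] as a fraction of b[key]."""
    return a[key] / b[key]


def price_per_tflop(card: dict) -> float:
    """Pounds (ex VAT) paid per TFLOP of peak FP32 throughput (illustrative metric)."""
    return card["price_gbp"] / card["fp32_tflops"]


for key in ("stream_processors", "fp32_tflops", "price_gbp"):
    print(f"WX 8200 vs WX 9100, {key:17}: {ratio(WX_8200, WX_9100, key):.1%}")

print(f"WX 8200: £{price_per_tflop(WX_8200):.2f} per peak TFLOP")
print(f"WX 9100: £{price_per_tflop(WX_9100):.2f} per peak TFLOP")
```

Both hardware ratios come out at roughly 87.5%, while the WX 8200 costs about 65% of the WX 9100, which works out at roughly £73 per peak TFLOP against about £99.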


The big difference between the two GPUs is memory. The Radeon Pro WX 8200 has 8GB of fast HBM2 memory with ECC support, while the WX 9100 has double that at 16GB. This is a key consideration for GPU rendering, where memory plays a crucial role. It is also becoming much more important for VR and real-time visualisation, particularly at 4K resolution and above. If you plan to multitask, working in 3D while you render a scene in the background, it’s even more critical, and 8GB is likely to be too little. That said, the WX 8200’s High Bandwidth Cache Controller (HBCC) can help you work beyond the GPU’s physical memory limits by allocating a portion of the workstation’s system memory for it to use, but more on this later.

In terms of competition, the Radeon Pro WX 8200 is naturally pitted against the Nvidia Quadro P4000 as they have similar price points. On scan.co.uk, the P4000 is currently available for £675 (ex VAT), £115 less than the WX 8200.

The P4000 features 8GB of GDDR5 memory but is very different in terms of physical packaging. It’s a single-slot GPU and is much less power-hungry. With a max power consumption of 105W, it is also compatible with entry-level workstations, such as the HP Z2 Tower or Dell Precision 3630 Tower, and only needs one 6-pin external power connector.

There’s also the Quadro P5000 (16GB GDDR5X), a double-slot GPU with a max power consumption of 180W, which comes in at £1,399 (ex VAT) on scan.co.uk.

For the purpose of this review, we put the WX 8200 up against the P4000 and P5000, as well as the WX 9100 and the WX 7100, AMD’s mid-range single-slot pro GPU, which has 8GB of GDDR5 memory and costs £521 (ex VAT).

Both Nvidia GPUs are set to be replaced imminently by the Turing-based Quadro RTX 4000 (8GB GDDR6) and Quadro RTX 5000 (16GB GDDR6), which should offer significant performance increases. The RTX 5000 is currently available to pre-order on scan.co.uk for £1,832 (ex VAT). Pricing for the RTX 4000 has not yet been announced.

Radeon Pro WX 8200 – testing

Our test machine is a typical mid-range workstation with the following specifications.

• Intel Xeon W-2125 (4.0GHz, 4.5GHz Turbo) (4 Cores) CPU

• 32GB 2666MHz DDR4 ECC memory

• 512GB M.2 NVMe SSD

• Windows 10 Pro for Workstations

For AMD GPUs we used the 18.Q4 driver; for Nvidia GPUs, the 416.16 driver. To cater to our key audience of designers, engineers, design visualisers and architects, we focused on five professional applications in the areas of 3D CAD, real-time visualisation, VR and ray trace rendering. The applications use a variety of APIs, including OpenGL, DirectX and OpenCL. Wherever possible, we used real-world design and engineering datasets.

Interactive 3D

For real-time visualisation, frame rates were recorded with FRAPS, using a 3Dconnexion SpaceMouse to ensure the model moved in a consistent way every time. We tested at both FHD (1,920 x 1,080) and 4K (3,840 x 2,160) resolutions.

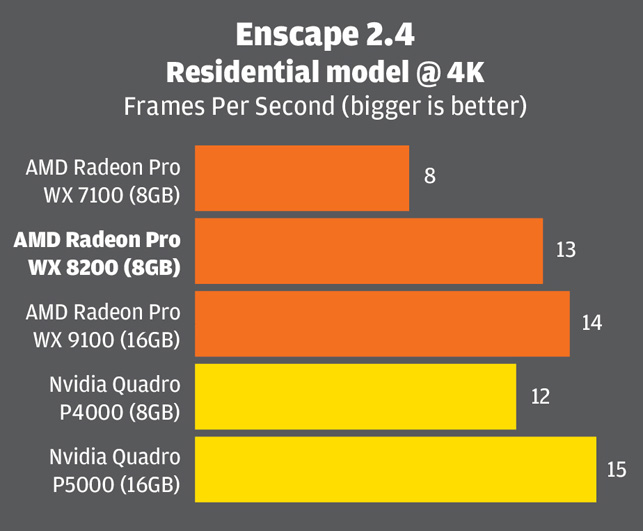

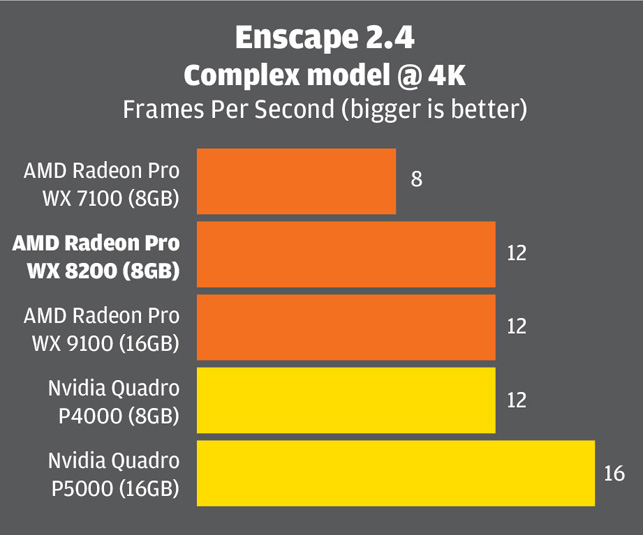

Enscape 2.4

Enscape is a real-time viz and VR tool for architects that uses OpenGL 4.2. It delivers very high-quality graphics in the viewport and uses elements of ray tracing for real-time global illumination.

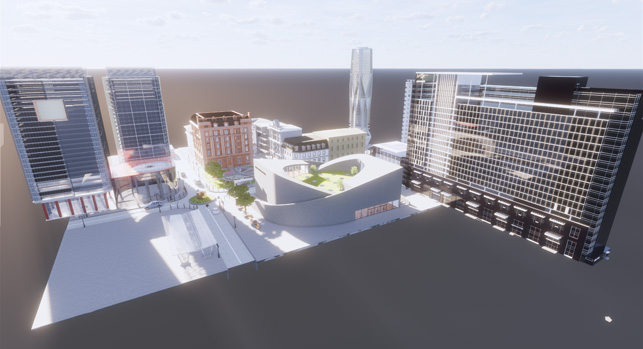

Enscape provided two real-world datasets for our testing: a large residential building and a colossal commercial development. The GPU memory requirements for these models are quite substantial. The residential building uses 2.8GB at FHD and 4.5GB at 4K, while the commercial development uses 5.5GB at FHD and 6.9GB at 4K. This was fine for our testing, as all the GPUs feature a minimum of 8GB, but it emphasises the point we made earlier about the importance of GPU memory. If you use multiple applications at the same time, especially a GPU renderer, you may quickly find yourself running out.
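To put these figures in context, here is a rough sketch of how much memory an 8GB card (the WX 8200, WX 7100 and P4000 in this test) would have left for other applications once each Enscape model is loaded. It assumes the reported figures are the only load on the GPU, which is a simplification; the variable and function names are illustrative.

```python
# GPU memory use reported for the two Enscape test models, in GB.
ENSCAPE_USAGE_GB = {
    ("residential", "FHD"): 2.8,
    ("residential", "4K"): 4.5,
    ("commercial", "FHD"): 5.5,
    ("commercial", "4K"): 6.9,
}

CARD_MEMORY_GB = 8.0  # WX 8200, WX 7100 and P4000 in this test


def headroom(usage_gb: float, capacity_gb: float = CARD_MEMORY_GB) -> float:
    """GB left on the card for other work (negative would mean overcommitted)."""
    return capacity_gb - usage_gb


for (model, res), used in ENSCAPE_USAGE_GB.items():
    print(f"{model:11} {res:3}: {used:.1f} GB used, {headroom(used):.1f} GB free")
```

On this simple reckoning, the commercial model at 4K leaves only about 1.1GB for everything else, which is not much room for a GPU renderer running alongside it.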

For the most part, the WX 8200 stood shoulder to shoulder with the WX 9100 and the P4000, although the P4000 had a slight lead when testing at FHD resolution. The Quadro P5000 topped the charts, although it is significantly more expensive. The WX 7100 came bottom by quite a long way.

At this point, it’s important to note the relevance of frames per second (FPS). Generally speaking, for interactive design visualisation you want more than 24 FPS for a fluid experience. When testing at 4K resolution, all of our GPUs delivered 16 FPS or under, which is not ideal. However, as with most applications, you can dial down the visual quality in Enscape to increase performance. For example, when set to draft, which still gives very good visual results, we achieved 25 FPS with the WX 8200.
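Frame rate targets are easier to reason about as a time budget per frame. This minimal sketch converts the figures above into milliseconds; the 24 FPS threshold is the rule of thumb from the text, not a hard limit.

```python
def frame_time_ms(fps: float) -> float:
    """Average time available per frame, in milliseconds, at a given frame rate."""
    if fps <= 0:
        raise ValueError("fps must be positive")
    return 1000.0 / fps


CASES = [
    ("24 FPS rule of thumb", 24),
    ("16 FPS (4K, best case)", 16),
    ("25 FPS (WX 8200, draft)", 25),
]

for label, fps in CASES:
    print(f"{label:24} {frame_time_ms(fps):5.1f} ms per frame")
```

At 24 FPS each frame gets about 41.7ms; at 16 FPS a frame takes 62.5ms, which is why the 4K results fall short, while the 25 FPS draft result brings it down to 40ms.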

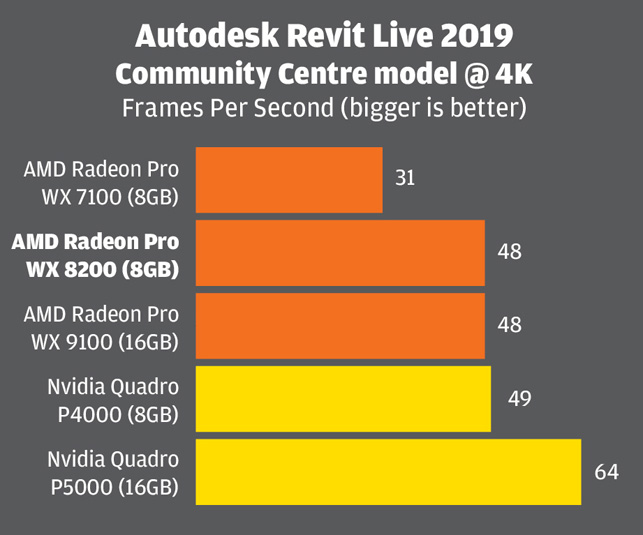

Autodesk Revit Live

We were interested in Autodesk Revit Live, another game engine and VR tool for architects, as it uses DirectX 11 instead of OpenGL. The model we used – a community centre – is not as demanding, and the graphics not as realistic as those in Enscape, but the performance results were very similar.

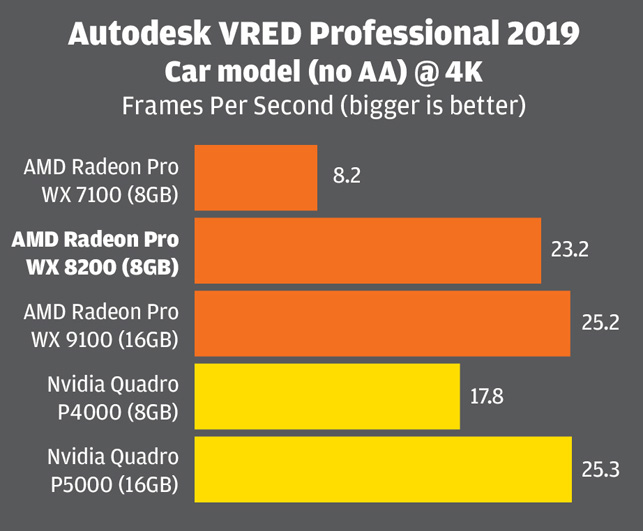

Autodesk VRED Professional

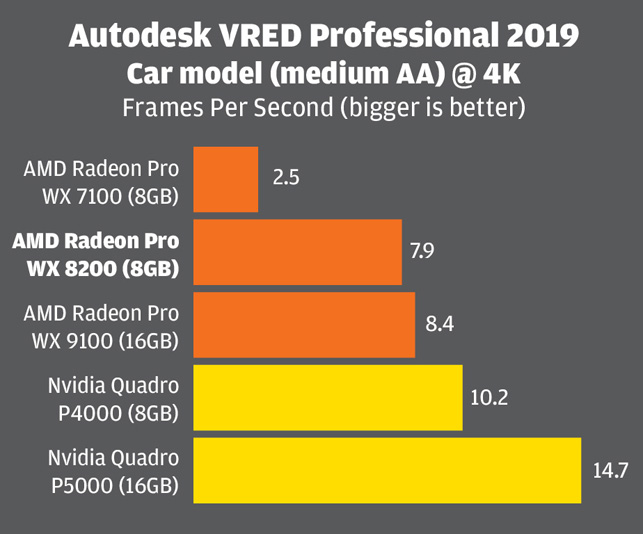

Autodesk VRED Professional 2019 is an automotive-focused 3D visualisation, virtual prototyping and VR tool. It uses OpenGL 4.3 and delivers very high-quality visuals in the viewport. It offers several levels of real-time anti-aliasing (AA), which is important for automotive styling as it smooths the edges of body panels, but AA calculations use a lot of GPU resources. We tested our automotive model with AA set to ‘off’ (see chart 4), ‘medium’ (see chart 5) and ‘ultra-high’.

When real-time AA was disabled, the Radeon Pro WX 8200 had a small but significant lead over the P4000, but it fell off the pace a bit when AA was enabled. At 4K, with AA set to ultra-high, even the P5000 struggled, and the model was very choppy in the viewport. In these types of automotive styling workflows, where visual quality is of paramount importance, you really need to look at a multi-GPU solution.

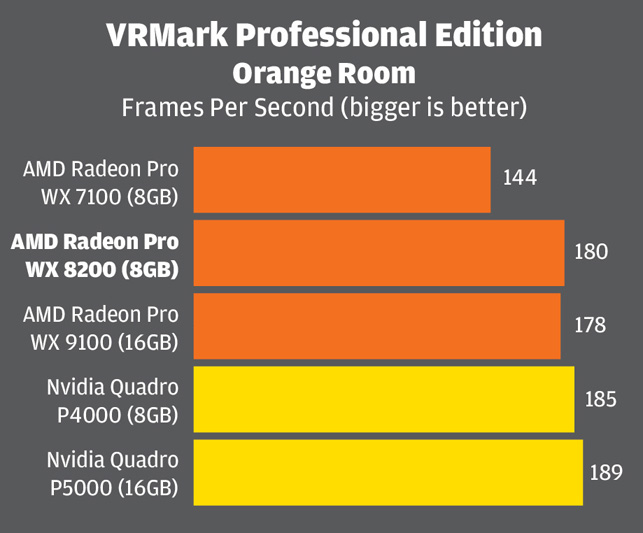

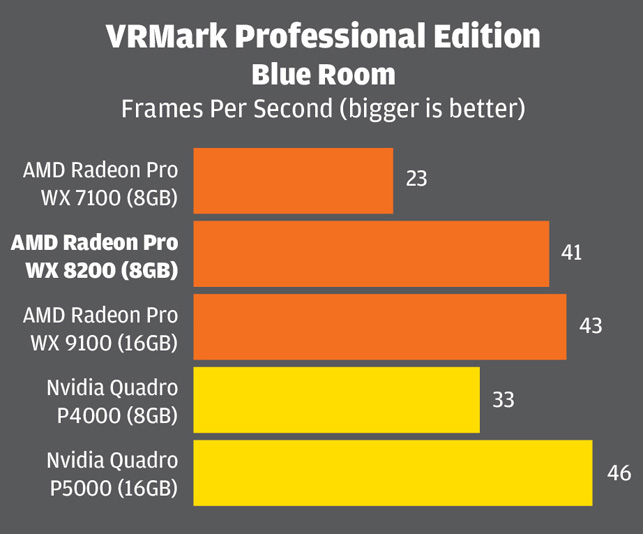

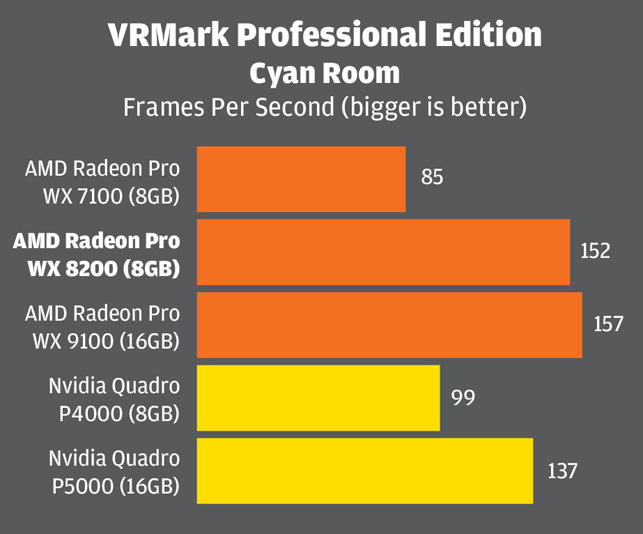

VRMark

We also tested with VRMark, a dedicated Virtual Reality benchmark that uses both DirectX 11 and DirectX 12. It’s biased towards 3D games, so not perfect for our needs, but should give a good indication of the performance one might expect in ‘game engine’ design viz tools, such as Unity and Unreal, which are increasingly being used alongside 3D design tools.

In the DX 11-based Orange Room test, the Radeon Pro WX 8200 was a tiny bit behind the P4000, but it showed a significant lead in the more demanding Blue Room test, which is designed for next-generation VR headsets.

The Radeon Pro WX 8200 also beat both Nvidia GPUs in the DX 12-based Cyan Room test, outperforming the P4000 by some way. According to AMD, this is because the Vega architecture is designed to perform very well with low-level APIs like DirectX 12, Vulkan and Metal (on macOS). At the moment, pro applications built on these APIs are thin on the ground, so this is more of a benefit for the future. That said, viz artists could choose to create real-time viz and VR experiences using DX 12 now, as both Unity and Unreal already support the Microsoft standard.

SolidWorks

The WX 8200 is somewhat overpowered for 3D CAD applications like SolidWorks, which tend to be CPU limited and work just as well with an entry-level or mid-range GPU like the Radeon Pro WX 5100.

However, CAD is one of the main reasons one would choose a professional GPU over a consumer GPU as they are certified and optimised for a range of CAD tools. This means there can be stability and performance benefits and access to pro viz features such as RealView in SolidWorks and OIT (Order Independent Transparency) in SolidWorks and Creo, which increase performance and visual quality of transparent objects in the viewport.

We won’t go into any great depth in our SolidWorks testing, as we will be covering this in detail soon in a separate article, but the long and short of it is that all of the professional GPUs tested in this article should be fine for 3D CAD.

With very complex assemblies, you might not get the frame rates you want for a fluid viewport experience without relying on ‘Level of Detail’ optimisation in the CAD tool. But this is not down to the power of the GPU; it comes down to the frequency of the CPU, which is the bottleneck in most CAD applications. That said, the new beta graphics engine in SolidWorks 2019 does a great job of reducing the CPU bottleneck and giving higher-end GPUs a chance to use their full power.

GPU rendering

The role of the GPU has changed dramatically over the years and it is now becoming an extremely viable processor for ray trace rendering. There are several rendering tools that can take advantage of AMD GPUs through the open API, OpenCL. This includes AMD’s own renderer, Radeon ProRender, as well as Blender Cycles, V-Ray, Indigo and others.

Radeon ProRender is free and available for SolidWorks, PTC Creo, Blender, 3ds Max, Maya and Unreal Engine (beta). It is also built into Cinema 4D and Modo (beta), and there’s a Rhino version available on GitHub.

Chaos Group V-Ray, the popular design viz renderer that has plug-ins for a wide range of DCC and CAD tools, supports AMD GPUs through an OpenCL implementation. It also supports Nvidia CUDA, although AMD GPUs do not work with renderers that only use CUDA.

Examples of other CUDA-based renderers include SolidWorks Visualize, Dassault Systèmes Catia Live Rendering, Siemens NX Ray Traced Studio or any Nvidia Iray plug-in.

Radeon ProRender for SolidWorks

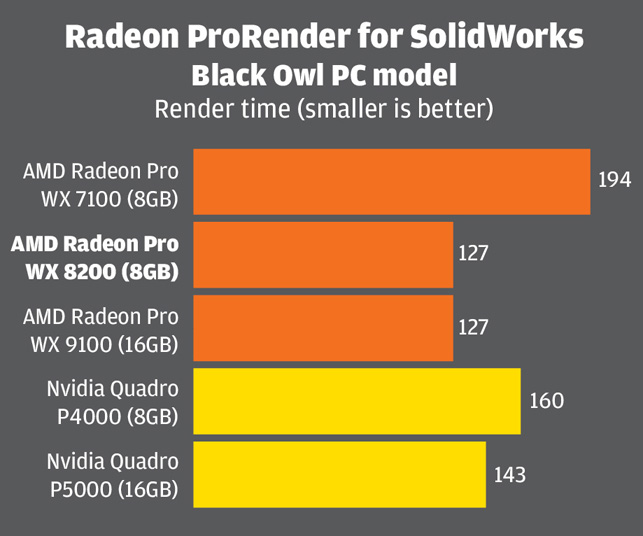

For our testing we used Radeon ProRender for SolidWorks, which is based on OpenCL 1.2. Using the Black Owl PC model from the SPECapc SolidWorks 2017 benchmark, we recorded the time it took to complete 1,000 render passes at 800 x 600 resolution, with the render quality set to low.

The Radeon Pro WX 8200 stood shoulder to shoulder with the WX 9100 and had a clear lead over both Nvidia GPUs, completing the scene 11 per cent faster than the P5000. This wasn’t entirely unexpected as Nvidia puts more development resources into CUDA than OpenCL and, of course, Radeon ProRender is also developed by AMD.

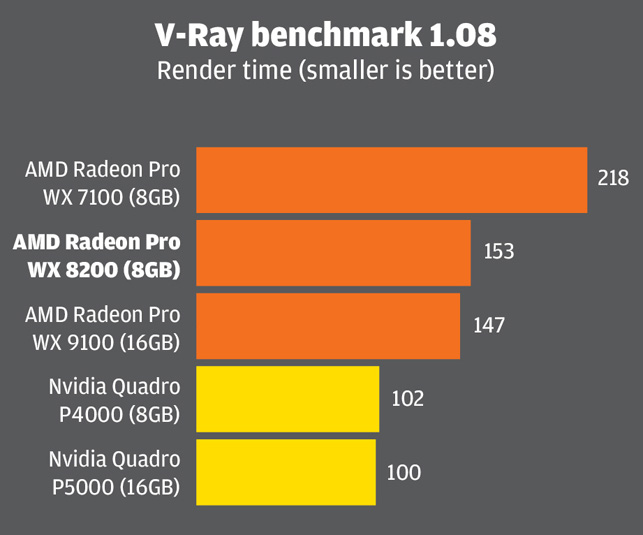

V-Ray

We also tested the GPUs with the freely downloadable V-Ray benchmark. Here, Nvidia has quite a substantial lead. While it gives a great idea of the relative performance one would expect in V-Ray, Nvidia GPUs are always likely to do better here as Chaos Group has done significantly more development work on the CUDA engine than it has on the OpenCL engine.

In short, GPU rendering performance is very dependent on the software, as it is with 3D graphics.

Radeon Pro WX 8200 – multitasking

Rendering a scene with a CPU-based ray trace renderer used to be the perfect excuse for a cup of tea. There was no point soldiering on with other work, as the entire workstation would grind to a halt. But now it’s easy to restrict the number of CPU cores the renderer uses, leaving some cores free for other tasks. Core allocation can even be done from within the rendering software itself.

Most GPU rendering applications don’t have that same granularity, but thanks to AMD’s Graphics Core Next (GCN) architecture, with its asynchronous compute engine, they don’t necessarily need to. Like all AMD Radeon Pro GPUs, the Radeon Pro WX 8200 was designed from the ground up to mix 3D graphics and compute tasks (such as ray trace rendering) and dynamically switch between them.

In practice, if you try to rotate your 3D model in the viewport while ray trace rendering on the same GPU, the driver instantly pauses some of the compute tasks, lets you move your model into position, then restarts them as soon as you stop. And it seems to do this very well.

We explored this feature by running the V-Ray benchmark and then, with the GPU running flat out at 100%, seeing how it responded when we tried to rotate a 3D model in SolidWorks and Autodesk VRED.

In SolidWorks, the viewport responded instantly to the movement of our mouse and felt fluid in both shaded with edges and RealView display modes. Impressively, frame rates were exactly the same whether or not the V-Ray benchmark was running: 20.01 FPS in shaded with edges mode and 13.13 FPS with RealView, shadows and Ambient Occlusion enabled.

In contrast, with the P4000, frame rates dropped quite dramatically, from 26.34 to 10.02 FPS in shaded with edges mode and from 16.70 to 6.31 FPS with RealView, shadows and Ambient Occlusion enabled. In addition, the viewport was at times unresponsive, and the model stuttered.

SolidWorks, as with most 3D CAD applications, is CPU limited so only uses a fraction of the GPU’s resources. So to give the WX 8200 more of a challenge we did the same test with Autodesk VRED Professional, which uses 100% of the GPU when moving a model in the viewport.

The viewport also responded instantly, but performance was impacted quite substantially. At FHD resolution with no anti-aliasing, frame rates dropped from 51.3 to 13.13 FPS. Things were still fairly fluid, but at higher resolutions or with AA enabled, we would expect the impact on the experience to be significant. However, the P4000 suffered more: frame rates dropped from 48.55 to 7.7 FPS and the viewport became choppy. The P5000 was even worse, dropping from 65.4 FPS all the way down to an unusable 3.00 FPS.
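The drops are easier to compare as the share of the idle frame rate each GPU kept while the V-Ray benchmark ran. The sketch below simply tabulates the SolidWorks and VRED figures reported above; the `retained` helper is our own illustration rather than a standard benchmark metric.

```python
# (test, GPU, idle FPS, FPS with the V-Ray benchmark running), as reported above.
RESULTS = [
    ("SolidWorks, shaded with edges", "WX 8200", 20.01, 20.01),
    ("SolidWorks, RealView + shadows + AO", "WX 8200", 13.13, 13.13),
    ("SolidWorks, shaded with edges", "P4000", 26.34, 10.02),
    ("SolidWorks, RealView + shadows + AO", "P4000", 16.70, 6.31),
    ("VRED, FHD, no AA", "WX 8200", 51.3, 13.13),
    ("VRED, FHD, no AA", "P4000", 48.55, 7.7),
    ("VRED, FHD, no AA", "P5000", 65.4, 3.00),
]


def retained(idle_fps: float, loaded_fps: float) -> float:
    """Fraction of the idle frame rate still delivered while the GPU renders."""
    if idle_fps <= 0:
        raise ValueError("idle_fps must be positive")
    return loaded_fps / idle_fps


for test, gpu, idle, loaded in RESULTS:
    print(f"{test:36} {gpu:8} {idle:6.2f} -> {loaded:6.2f} FPS "
          f"({retained(idle, loaded):.1%} retained)")
```

The WX 8200 kept 100% in SolidWorks and about 26% in VRED, against roughly 38% and 16% for the P4000 and under 5% for the P5000 in VRED. In absolute terms, it also delivered the highest loaded frame rate in every one of these tests.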

But what impact does the WX 8200’s ability to multitask have on render speeds? In short, very little. In real-world workflows, graphics tasks tend to come in short bursts. The designer may reposition a model so she can work on a different face, or zoom into an assembly to model some details. It’s not like a 3D game, where the GPU is running flat out all the time as you bear down on your enemies.

We tested this out in SolidWorks and VRED, performing 20 separate rotate and zoom operations in each application over a two-minute period as the V-Ray benchmark ran in the background. This only increased the render time by 2 seconds and 10 seconds respectively (or 1.5% and 6%).

Use cases in design review or project presentations might be a little different (an architectural walkthrough, for example), but in these situations you’d probably want to pause the render to ensure you give your team or your client the very best experience. We tried it out nonetheless: spinning the car model in VRED for the entire duration of the render only extended the render time by 53 seconds (or 37%).

With V-Ray itself, as opposed to the V-Ray benchmark, it is possible to adjust the load on the GPU to help keep the viewport responsive. This is done by reducing the Rays per pixel and/or Ray bundle size settings, which essentially break the data passed to the GPU into smaller chunks. Chaos Group says this will reduce the rendering speed, although we don’t know by how much.
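One simple way to see why smaller chunks help the viewport but slow the render is a toy model, sketched below under loudly stated assumptions: the render is split into equal chunks that each run to completion, a viewport redraw can only squeeze in between chunks, and every chunk carries a fixed overhead. The class name and all numbers are hypothetical; this is not how V-Ray actually schedules work, and it does not model GCN’s asynchronous compute.

```python
import math
from dataclasses import dataclass


@dataclass
class ChunkedRender:
    """Toy model of a GPU render split into equal chunks submitted one at a time.

    Hypothetical: all parameters are illustrative, not V-Ray measurements.
    """
    total_work_ms: float  # GPU time the render needs if never interrupted
    chunk_work_ms: float  # GPU time per chunk (smaller = finer-grained)
    overhead_ms: float    # assumed fixed cost per chunk (launch, sync, etc.)

    def chunks(self) -> int:
        return math.ceil(self.total_work_ms / self.chunk_work_ms)

    def render_time_ms(self) -> float:
        # Finer chunks mean more chunks, so more per-chunk overhead in total.
        return self.total_work_ms + self.chunks() * self.overhead_ms

    def worst_viewport_wait_ms(self) -> float:
        # A redraw arriving just after a chunk starts must wait for it to finish.
        return self.chunk_work_ms + self.overhead_ms


coarse = ChunkedRender(total_work_ms=120_000, chunk_work_ms=200, overhead_ms=1)
fine = ChunkedRender(total_work_ms=120_000, chunk_work_ms=20, overhead_ms=1)

for name, job in [("coarse", coarse), ("fine", fine)]:
    print(f"{name:6} render {job.render_time_ms() / 1000:.1f} s, "
          f"worst viewport wait {job.worst_viewport_wait_ms():.0f} ms")
```

With these made-up numbers, cutting the chunk size tenfold shrinks the worst-case viewport wait from about 201ms to 21ms, at the cost of a render roughly 4.5% longer (126.0s versus 120.6s).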

Radeon Pro WX 8200 – pushing the memory limits

GPU memory is becoming more and more important, particularly if you intend to use the GPU for both compute and graphics tasks. And while 8GB may have appeared to be a lot a few years ago, it is now considered ‘entry-level’ for viz-focused workflows.

To give this some context, simply loading up our large Enscape model uses 5GB at FHD and 6.8GB at 4K resolution, leaving little space for much else. Even SolidWorks 2019, with its new OpenGL 4.5 graphics engine, can easily use 3GB or 4GB on a single model. And if you run multiple applications, each with their own datasets, GPU memory soon gets devoured.

For demanding workflows, the obvious solution is to invest in a GPU with more memory. However, 16GB GPUs, such as the Radeon Pro WX 9100 or Quadro P5000, are significantly more expensive, and 32GB GPUs even more so.

But AMD has a trick up its sleeve to help users get more out of its Radeon Pro WX 8200 and WX 9100 by extending the practical limits of GPU memory.


With most GPU architectures, when GPU memory becomes full, applications crash. AMD’s Vega, on the other hand, features an HBCC (High Bandwidth Cache Controller) that allows GPU memory to spill over into system memory, in much the same way that data is paged to the hard drive when system memory becomes full.

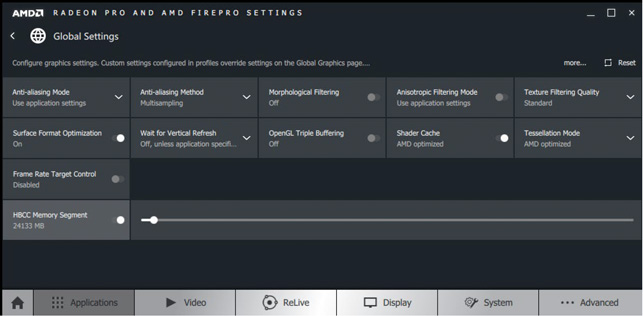

The size of this cache is controlled in the Radeon Pro driver using a slider. It can be as big as the workstation’s entire system memory, although this is probably neither advisable nor beneficial.

To test it out we launched two separate processes that we knew, when combined, would use up more than the 8GB on the GPU – viewing our Revit Live test model at FHD resolution (4GB) and rendering our SolidWorks computer model in Radeon ProRender at 4K resolution (6.4GB). Using GPU-Z, a free tool that measures GPU resource utilisation, the GPU was shown to be using a total of 10.4GB.

Even though this was 2.4GB more than the GPU’s 8GB of physical memory, we were able to run the render in ProRender and smoothly manipulate the Revit Live model at the same time. On the P4000 this simply wasn’t possible, as Radeon ProRender crashed as soon as Revit Live became the active application. To push things further, we attempted to fill the GPU with over 12GB of data, but this ended up freezing our system.
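The arithmetic behind this test is simple, and a minimal sketch makes the overflow explicit. It assumes HBCC behaves as plain overflow into system memory, which is a simplification: the controller itself decides which pages stay on the card. The function name is hypothetical.

```python
VRAM_GB = 8.0  # physical HBM2 on the Radeon Pro WX 8200


def spill_gb(workloads_gb: dict, vram_gb: float = VRAM_GB) -> float:
    """GB of combined demand that cannot fit in on-card memory (0 if it all fits).

    Simplification: treats HBCC as plain overflow into system memory.
    """
    return max(0.0, sum(workloads_gb.values()) - vram_gb)


test_load = {
    "Revit Live model, FHD": 4.0,
    "ProRender, SolidWorks model at 4K": 6.4,
}

total = sum(test_load.values())
print(f"Total demand {total:.1f} GB, {spill_gb(test_load):.1f} GB beyond the card")
```

By the same reckoning, the later attempt to load over 12GB would have pushed more than 4GB beyond the card, which is where our system froze.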

More experimentation is needed to find out the practical limits and benefits of HBCC – and that’s the subject of a whole different article – but it looks like it’s probably best suited to giving you a bit of additional headroom and not as a means of getting a 32GB GPU on the cheap. Even if it could handle such large datasets, performance would probably suffer considerably.

Radeon Pro WX 8200 – remote workstation

Flexible working is on the rise, and long gone are the days when work ended as soon as you left the office. AMD now includes a remote workstation capability for its Radeon Pro GPUs, which allows you to access your 3D workstation from almost anywhere, on any device. Using a home PC, laptop or even a tablet, you basically get a window into your workstation with full 3D graphics acceleration, so you can spin your 3D models as if you were sat at your desk.

The Radeon Pro WX 8200’s remote workstation capability is built into the core Radeon Pro driver, and there are a few additional requirements: Citrix XenDesktop Virtual Delivery Agent (VDA) needs to be installed on the workstation and Citrix Receiver on the client device, so there is a licensing cost to consider.

We didn’t test out this capability, but Citrix is a proven technology, and on paper it looks like a simple way to extend the reach of your desktop workstation, or even to create a dedicated server for running 3D applications in a virtualised environment. The same technology is being used in the new HPE Edgeline EL4000 Engineering Workstation (EWS), which features Radeon Pro WX 4100 GPUs.

Capturing the moment

Radeon Pro GPUs include a ‘professional-grade’ screen capture and recording software tool called ReLive. The utility was originally developed to capture and stream 3D games, but it also has professional applications; users can easily create videos for presentations and tutorials. The software provides full control over resolution and quality and you can also trim videos to length. The entire desktop can be captured, as well as specific regions or windowed applications. All in all, it’s a neat little utility.

Conclusion

The Radeon Pro WX 8200 is an interesting proposition for workstation users. By offering a slightly cut down version of the Radeon Pro WX 9100 at an attractive sub $1,000 price point, AMD is now better able to compete with Nvidia’s Quadros when it comes to price/performance.

The Radeon Pro WX 8200 appears to do extremely well in OpenCL renderers and DirectX 12 applications, where it even beats the considerably more expensive Nvidia Quadro P5000. It does OK in OpenGL and DirectX 11 applications, generally sitting somewhere between the P4000 and P5000, but sometimes plays second fiddle to the Quadro P4000, which is over £100 cheaper and consumes much less power. In such workflows, one can only expect Nvidia’s lead to increase with the new Quadro RTX 4000, although we don’t yet know how much this soon-to-be-released GPU will cost.

But AMD holds a very big trump card when it comes to multi-tasking. It has done an excellent job of turning the humble ‘graphics card’ into a true multi-purpose processor, allowing users to swap seamlessly between graphics and compute tasks. Try rendering on an Nvidia GPU and then spinning a model in your 3D design tool, and your experience will likely be choppy in comparison. Users can get around this with multiple GPUs, one dedicated to graphics and the other to compute, but that’s an expensive way to solve the problem and will mean one of your GPUs sits idle some of the time. Some renderers allow you to reduce the load on the GPU, but this will extend rendering times, and you won’t be taking full advantage of the GPU, even when you’re not spinning your 3D model.

As always with pro applications, it all boils down to workflows. Nvidia might currently be the Usain Bolt of 3D graphics and the favourite in a flat race. But if you want to juggle circus balls, while powering down the back straight, our money would be on AMD.

This article is part of a DEVELOP3D workstation special report.